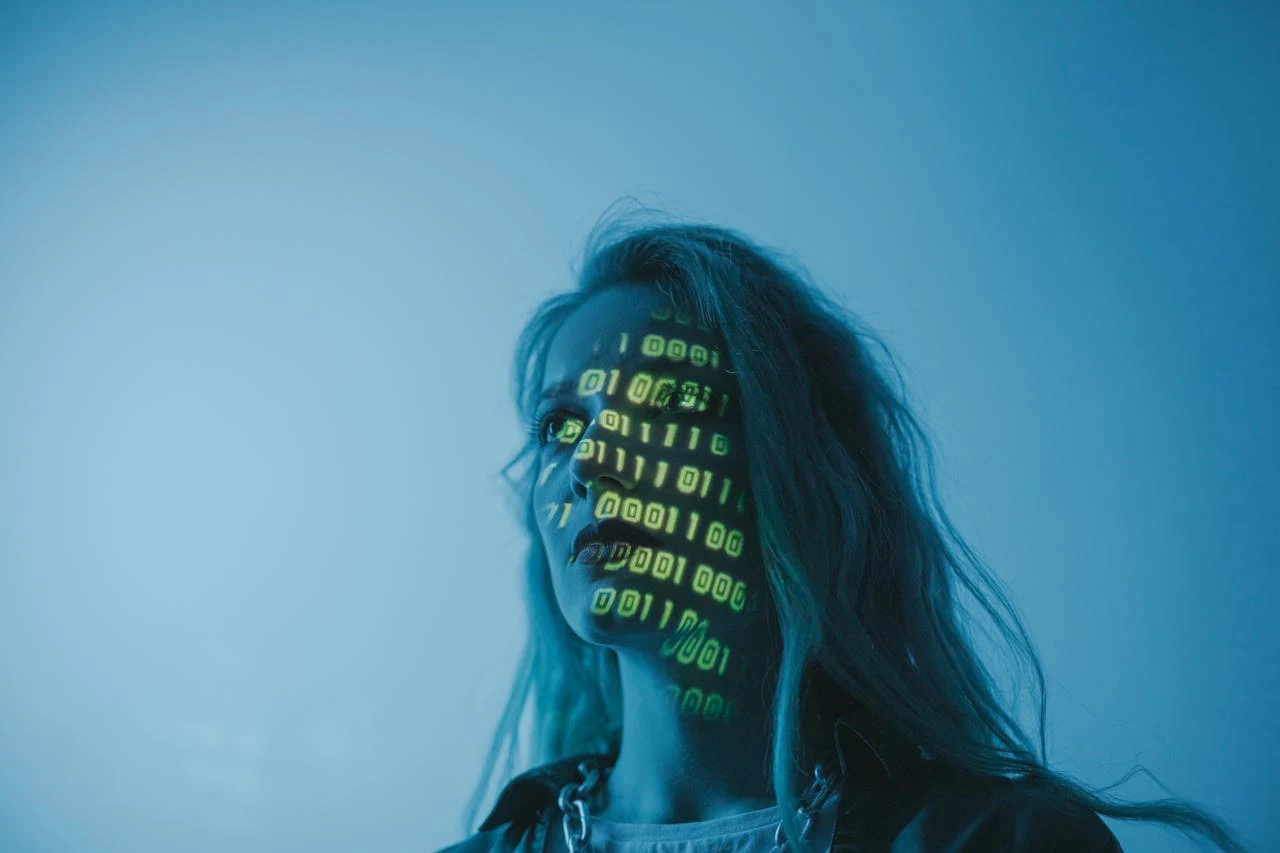

The Indian government has proposed significant amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, aimed at curbing the misuse of deepfakes and generative AI content. Here's a breakdown of the key changes:

New IT Rules Targeting Deepfakes (2025 Draft Amendments)

- Definition Introduced

Synthetically Generated Information: Defined as content that is created, generated, modified, or altered using a computer resource so that it reasonably appears authentic or true.

- Mandatory Labelling

Significant social media platforms with over 5 million registered users in India (such as Facebook, YouTube, and Instagram) must:

- Ask users to declare whether uploaded content is synthetically generated.

- Take reasonable steps to verify these claims.

- Clearly label synthetic content with visible or audible markers.

- For visual content such as video: markers must cover at least 10% of the display area.

- For audio: markers must play during the first 10% of the clip's duration.

- These markers cannot be removed or altered.
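To make the 10% thresholds concrete, here is a minimal Python sketch of the arithmetic. It assumes "10% of the display area" means 10% of the frame's total pixel area and "first 10%" means the opening tenth of the clip's duration; the function names and the 1920x1080 banner example are hypothetical illustrations, not anything defined in the draft rules.

```python
# Hypothetical helpers illustrating the 10% labelling thresholds reported
# for the draft rules. Assumption: visual coverage is measured as a share
# of total pixel area, and the audio window as a share of total duration.

def min_label_area_px(frame_w: int, frame_h: int) -> int:
    """Smallest label area (in pixels) covering at least 10% of the frame."""
    total = frame_w * frame_h
    return (total + 9) // 10  # integer ceiling of total / 10


def label_is_large_enough(label_w: int, label_h: int,
                          frame_w: int, frame_h: int) -> bool:
    """True if the label's area is at least 10% of the frame's area."""
    # Compare area * 10 against the frame area to stay in exact integers.
    return label_w * label_h * 10 >= frame_w * frame_h


def audio_marker_window_s(duration_s: float) -> float:
    """Length (seconds) of the opening window in which the audio marker must play."""
    return duration_s / 10


def audio_marker_in_window(marker_start_s: float, duration_s: float) -> bool:
    """True if the marker starts within the first 10% of the clip."""
    return 0 <= marker_start_s <= audio_marker_window_s(duration_s)


if __name__ == "__main__":
    # A 1920x1080 frame needs a label of at least 207,360 pixels.
    print(min_label_area_px(1920, 1080))
    # A hypothetical full-width banner 108 px tall is exactly 10% of that frame.
    print(label_is_large_enough(1920, 108, 1920, 1080))
    # For a 60-second audio clip, the marker must fall in the first 6 seconds.
    print(audio_marker_window_s(60))
    print(audio_marker_in_window(2.5, 60))
```

The visual check uses integer arithmetic so that a label sitting exactly on the 10% boundary is not misjudged by floating-point rounding.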

- Legal Protections

Platforms that remove or block synthetic content in good faith will not lose their legal (safe-harbour) protection as intermediaries.

- Takedown Oversight

Only senior officers (Joint Secretary or above) can issue takedown orders.

All takedown actions will undergo a monthly review by a Secretary-level officer to ensure legality and proportionality.

- Timeline & Feedback

Draft rules were released on October 22, 2025.

Public feedback is open until November 6, 2025.

Final rules are expected to take effect from November 1, 2025.

IndianWeb2.com is an independent digital media platform for business, entrepreneurship, science, technology, startups, gadgets and climate change news & reviews.